Photo: Corporal Annabelle Marcoux, Canadian Armed Forces

A sailor watches BLACKHORSE, a CH-148 Cyclone helicopter, fly away from HMCS St. John’s during Op REASSURANCE on October 9, 2025.

Max Hlywa is a Defence Scientist at Director General Military Personnel Research and Analysis (DGMPRA) within the Department of National Defence (DND) in Ottawa, where he has worked since receiving his MA in Psychology from Carleton University in 2011. His research has focused on organizational effectiveness, and more specifically on the development and use of organizational performance measurement frameworks to articulate organizational strategies and communicate results at both the departmental and divisional levels. He has received multiple awards for his work, including the Public Service Award for Excellence in Profession for his contributions to the Sexual Misconduct Research Program and the DM/CDS Team Impact Award for his contributions to the Defence Workplace Well-being Survey.

Dr. Krystal K. Hachey is a Defence Scientist with Director General Military Personnel Research and Analysis (DGMPRA), within the Department of National Defence (DND). She holds an M.Ed. in educational psychology from the University of Saskatchewan and a Ph.D. in measurement and evaluation from the University of Ottawa. Dr. Hachey conducts scientific research examining the relationships between culture, ethos, professionalism, performance, and leadership effectiveness in the Canadian Armed Forces.

Samantha Urban is a Defence Scientist at Director General Military Personnel Research and Analysis (DGMPRA) within Canada’s Department of National Defence (DND), in Ottawa. She received her MA in Sociology from Queen’s University in 2005. Her recent research has focused on developing performance measurement frameworks in support of eliminating harmful and inappropriate sexual behaviour, as well as in support of integrated complaint and conflict management.

One of the goals of organizational research is to develop decision-support tools for managing the performance of organizations.Footnote1 One such tool can be developed through a process called performance measurement, and this article describes the application of that process to the Canadian Armed Forces’ (CAF) Military Personnel System (MPS). Because it also covers some of the lessons learned along the way, the article should interest readers curious about how such systems, and the organizations that manage them, measure and report on their performance.

The Department of National Defence (DND) and the CAF have one of the largest departmental budgets in the Canadian government, much of which is allocated to CAF personnel. It is therefore important that the MPS be efficient, effective, and aligned with Canadian strategic priorities for defence. The main challenge in developing a Performance Measurement Framework (PMF) for such a system is identifying the best evidence that the system is working as intended. Unlike for-profit private firms, which have traditionally measured their performance in terms of profit,Footnote2 a military personnel system must be assessed against a variety of outcomes, some of them less tangible or straightforward, such as personnel readiness and well-being. In the past, uncertainty about what exactly to measure contributed to a reliance on whatever loosely related data was at hand.Footnote3 Such practices became particularly inadequate once the Government of Canada’s 2016 Policy on ResultsFootnote4 called for increased transparency around the delivery of results by each department. Espousing the tenets of ‘deliverology’ (a results-oriented approach to managing governments),Footnote5 the policy aimed to define and improve departmental outcomes through the creation and ongoing use of Departmental Results Frameworks.Footnote6 As the institutional capacity to meet the demands of the policy did not immediately exist, a then-new project to develop a PMF for the MPS was intended to better position the department to report on the generation, support, and management of military personnel. By establishing detailed articulations of the relevant organizational strategies where none had previously existed, the project provided the clarity and opportunity needed to develop metrics grounded in specific activities, the outputs they produce, and the outcomes that logically follow.

The project began with the establishment of a stepped process to develop the PMF. The first step in that process was to create a visual overview of the mission for the military personnel system and its various objectives. The next steps included the identification of key performance questions pertaining to each objective, the use of logic models to describe how different organizations within the system are intended to work, and the development of relevant metrics (i.e., output measures and outcome indicators). Some consideration was also given to a communication platform suitable for reporting on organizational performance to the intended audiences. In this article, the authors describe the lead-up to the project, the process that was followed, and some examples from each step in the process. Also included are some lessons that could assist with related future efforts.

The Military Personnel System Performance Measurement Framework Project

Prior to 2014, there were several measurement and reporting systems related to CAF personnel. However, these systems were often inconsistent or incoherent, or lacked explicit alignment with organizational mandates and strategies. Taken together, they could not adequately monitor or report on the results of the many organizations that make up the CAF’s MPS. Consequently, in 2014 CAF senior leadership initiated an unprecedented project to create an authoritative framework spanning the entire military personnel system that would enable performance reporting, support evidence-based decision making, and inform strategic planning.Footnote7

While researchers have described a variety of reasons for measuring organizational performance,Footnote8 the project had two main purposes. The first was to enable upward reporting of performance to the relevant authorities and outward reporting to external partners, stakeholders, and other interested parties. The second was to enable performance management; as the saying goes, “what gets measured gets managed.”Footnote9 By identifying areas of strength and areas needing improvement, the framework was intended to highlight specific opportunities to focus management efforts on improving results. In addition to these two main purposes, the project set five further specifications. The PMF should:

- reflect the mandate of DND and the CAF and align with strategic direction;
- assess performance at both the strategic (e.g., meeting legislated requirements) and operational (e.g., meeting recruiting targets) levels;
- include measures related to outputs (e.g., counts of tangibles produced), outcomes (e.g., indicators suggesting desired effects), and efficiencies (e.g., cost per product unit);
- encompass the right measures (not merely those that are convenient); and
- leverage a combination of organizational data and member feedback.Footnote10

Two phases were planned for the project. Phase 1 consisted of the research and development of the PMF, led by researchers at Director General Military Personnel Research and Analysis (DGMPRA). Phase 2 involved the implementation, operation, and maintenance of the framework going forward, led by staff at Chief Military Personnel (CMP). As will be seen, Phase 2 was to include the establishment of a software-based communication platform that could provide on-demand situational awareness on the performance of the military personnel system.Footnote11

The Performance Measurement Framework Development Process

The PMF development process established by the project team was based on best practices from the relevant literature,Footnote12 expert advice from CAF members experienced in such processes, and guidelinesFootnote13 put forth by the Treasury Board of Canada Secretariat. At its simplest, the process has two parts. The first part (steps 1 to 4 below) articulates the strategy through which the organization strives to achieve its mission; in other words, it explains how the organization intends to work. The second part (step 5) identifies data that can be used to demonstrate that the organization is working as intended. Overall, this approach aims to “rationalize the programmatic structure as a prelude to measurement.”Footnote14 It would be difficult to assess the performance of an organization without a clear understanding of its intended results and a rational explanation of how it intends to deliver them. The process also gives some consideration to communicating organizational performance (step 6). The steps are described in more detail below.

Steps 1 and 2: Develop a Strategy Map and Establish Strategic Objectives

The “strategy map” is a one-page visual that expands upon the organization’s mission, featuring strategic objectives for its different functional areas.Footnote15 Typically informed by strategic-level documents (e.g., mandate, doctrine, legislation, policy), the strategy map connects the organization’s mission to the foundational elements that drive and support it, as well as to the ultimate goal that it pursues through the fulfilment of the mission.Footnote16

Step 3: Develop Key Performance Questions

Key performance questions (KPQs) help an organization identify the aspects of performance that are critical to the achievement of its different objectives (identified in the previous steps). KPQs focus the discussion on the most relevant aspects of performance and help management identify the best data for evidence-based decision-making. The development or revision of KPQs can also help ensure that an organization is not using an outdated perspective on the essential aspects of its business.Footnote17

Step 4: Build Logic Models

Logic models are used both inside and outside of government organizations to depict the ways in which a program contributes toward its intended outcomes. In the MPS PMF project, logic models were created to show how different organizations within the MPS achieve the objectives articulated in their own strategy maps. Table 1 summarizes the six main components of a logic model: inputs, activities, outputs, direct outcomes, intermediate outcomes, and ultimate outcomes.Footnote18

| Component | Description |
|---|---|
| Inputs | The resources used by an organization to conduct its business (e.g., human and financial resources); may also include relevant direction, requirements, and/or constraints facing an organization, as well as outputs or outcomes from other logic models |
| Activities | How an organization produces its outputs (e.g., processes) |
| Outputs | Tangible products resulting from activities (e.g., documents) |
| Direct Outcomes | Effects resulting directly from one or more outputs (e.g., the target audience is informed) |
| Intermediate Outcomes | Effects that direct outcomes contribute to (e.g., some desired behaviour in that audience) |
| Ultimate Outcomes | Effects that intermediate outcomes contribute to (e.g., some desired state in an organization or in some population) |

Step 5: Determine Key Performance Indicators

Key performance indicators are used in the process to demonstrate organizational performance in two ways. First, they answer specific KPQs by pointing to relevant data (see Table 2 for an example). Second, they provide evidence of the results described in logic models, either as measures of output or as indicators of intended outcomes. In either case, they are explicitly tied to what was produced in the earlier steps of the process (i.e., KPQs and/or logic models). As highlighted in the specifications for the MPS PMF listed above, the framework needed to concentrate on the right aspects of performance, but also to include a complementary balance between indicator types. For example, the project team aimed to balance indicators based on subjective data (e.g., survey research) with related indicators based on objective data (e.g., administrative data), and to balance measures of output produced against indicators suggestive of the related outcomes.Footnote19
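To make the idea of a balanced indicator set concrete, the following Python sketch tags each indicator by data basis (subjective or objective) and by measure type (output or outcome), then reports any category left empty. The `Indicator` class, the `balance_gaps` function, and the rule that every category must appear at least once are illustrative assumptions rather than part of the MPS PMF; the second example indicator is likewise hypothetical.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Indicator:
    name: str
    data_basis: str    # "subjective" (e.g., survey) or "objective" (e.g., administrative)
    measure_type: str  # "output" (measure of output) or "outcome" (indicator of outcome)


def balance_gaps(indicators):
    """Return the categories for which no indicator exists.

    Hypothetical rule: an indicator set is treated as balanced when every
    data basis and every measure type appears at least once.
    """
    bases = Counter(i.data_basis for i in indicators)
    types = Counter(i.measure_type for i in indicators)
    gaps = [b for b in ("subjective", "objective") if bases[b] == 0]
    gaps += [t for t in ("output", "outcome") if types[t] == 0]
    return gaps


# Example indicators; only the first is drawn from the article (Table 2).
recruiting = [
    Indicator("External applicants received for processing", "objective", "output"),
    Indicator("Public interest in CAF careers (hypothetical survey item)", "subjective", "outcome"),
]
print(balance_gaps(recruiting))      # []
print(balance_gaps(recruiting[:1]))  # ['subjective', 'outcome']
```

A simple presence check like this only flags missing categories; judging whether the indicators are the *right* ones still requires the KPQs and logic models.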

Step 6: Develop a Communication Platform

The final step in the process was to develop a software solution for displaying all of the relevant performance information. The solution needed to be easily modified in accordance with changes to the MPS, and to present up-to-date performance information. Such a platform would enable performance management and assist with the timely fulfilment of reporting requirements.Footnote20

Notably, steps 1 to 6 were meant to be cyclical. Each step was intended to be revisited periodically (e.g., every few years) to ensure that the framework accurately reflects the natural evolution of the MPS in response to internal changes in policies or programs and to external factors, including trends in society, technology, the environment, the economy, or politics.

The Military Personnel System Performance Measurement Framework Strategy Map

The first step in the PMF development process was to create a strategy map that encompassed the entire MPS. This was accomplished using feedback from key representatives and stakeholders throughout the system, as well as strategic documents describing the higher-level aims of the system.Footnote21 The goal was to identify the objectives of each functional area and to make it obvious which organization within Military Personnel Command was responsible for each objective. An outdated 2008 model of CMP’s functionsFootnote22 served as the base structure for the MPS PMF Strategy Map.

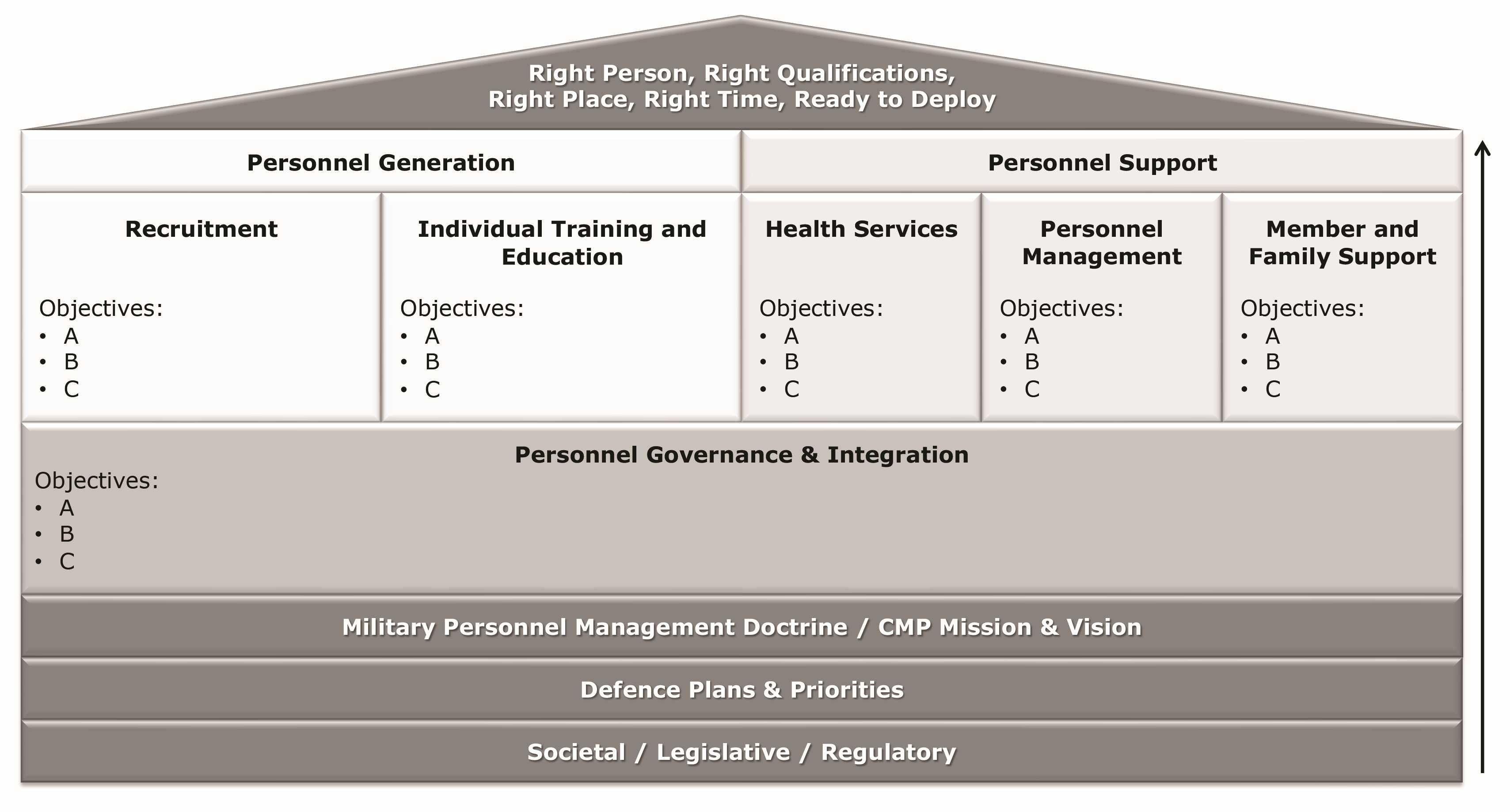

Figure 1 is a simplified version of the MPS PMF Strategy Map, with some details removed. The map is meant to be read from bottom to top, with the elements at the bottom supporting those above.Footnote23 The foundational elements at the bottom were the policies, functions, and knowledge base relevant to the MPS. The category Societal, legislative, and regulatory referred to governmental priorities and other external conditions affecting the system. Defence plans and priorities referred to relevant DND/CAF plans and priorities. Military personnel management doctrine and CMP mission and vision referred to the specific guiding principles for the MPS. Above these foundational layers sat personnel governance and integration, which referred to important MPS functions affecting the whole system, such as research, policy, and financial planning.Footnote24 The two sections above that were personnel generation, which covered recruitment, individual training, and education, and personnel support, which covered health services, personnel management, and member and family support.Footnote25 At the top of the map was the ultimate goal for the MPS: to produce the “right person,” with the “right qualifications,” in the “right place,” at the “right time,” who was “ready to deploy.”Footnote26

Figure 1: The MPS PMF Strategy Map (Simplified)Footnote27

Once the MPS PMF Strategy Map was developed, CMP directed over a dozen subordinate organizations (responsible for different sections of the strategy map) to create their own performance measurement frameworks, following DGMPRA’s process for PMF development.Footnote28 This was to enable a more detailed articulation of relevant efforts and results, and to increase the precision with which different elements of the MPS could be measured. However, this direction also diverted the process away from summarizing the strategy and results of the MPS as a whole. When deciding at what organizational level to proceed with PMF development, it is important to consider the end user(s), their different purposes, and what level of detail is sufficient for those purposes. The following section provides insight into the products that emerged from just one of the organizations in the MPS, namely Military Personnel Generation Command (MILPERSGEN).

Example: Military Personnel Generation Command

MILPERSGEN remains responsible for recruitment, training, and education.Footnote29 In accordance with the PMF development process, the first step was to develop the MILPERSGEN PMF Strategy Map.Footnote30 This was accomplished using key strategic documentsFootnote31 and the expertise of knowledgeable senior staff members.

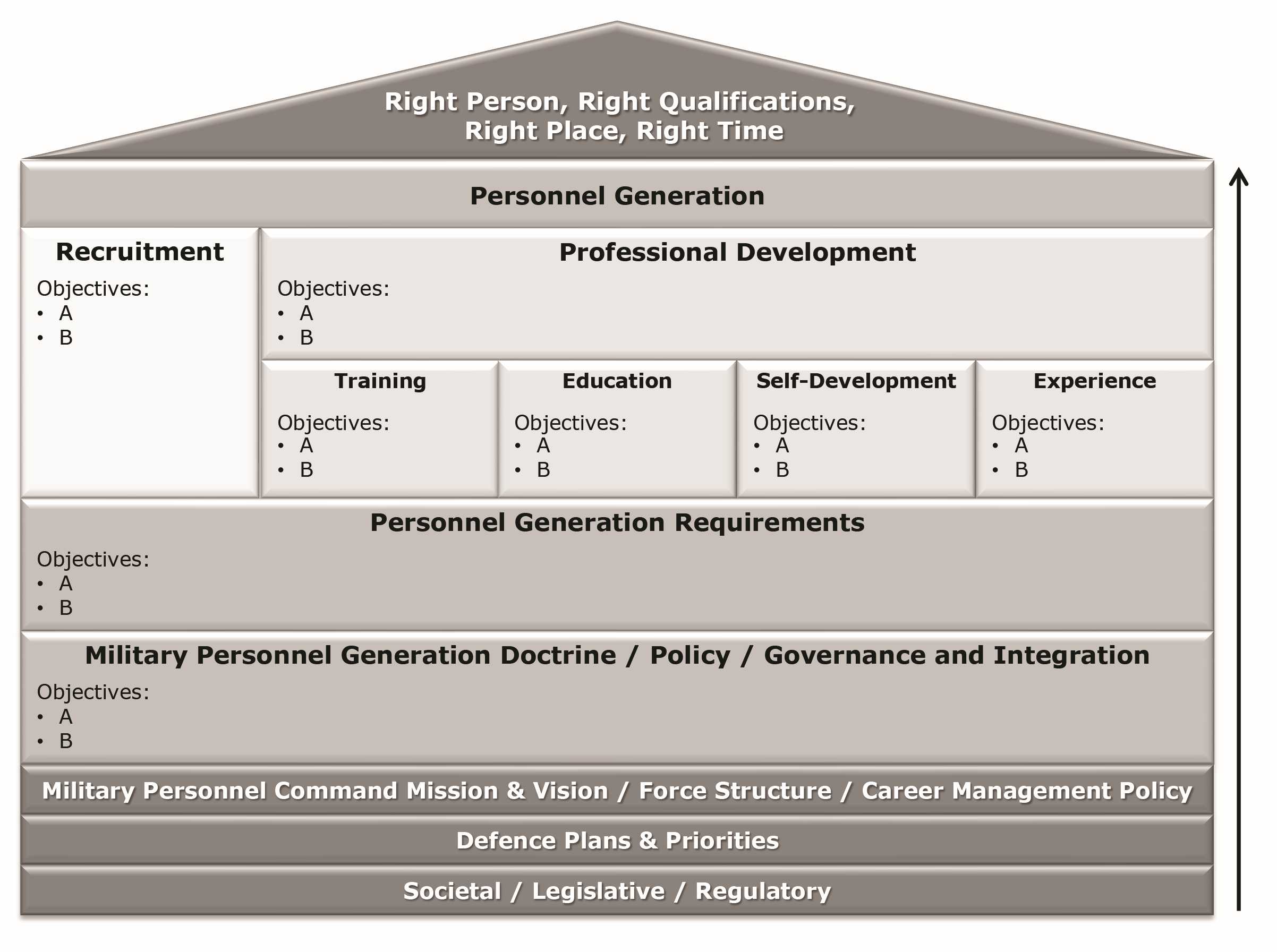

Figure 2 is a simplified version of the MILPERSGEN PMF Strategy Map. Similar to the map developed for the broader MPS, its foundational elements included societal, legislative, and regulatory influences, Defence plans and priorities, and the MPC mission and vision.Footnote32 The MILPERSGEN strategy map also included specific foundations, namely force structure and career management policy.Footnote33 Four main sections were identified for the strategy map: recruitment; professional development (which had four sub-sections); personnel generation requirements; and MILPERSGEN doctrine, policy, and governance and integration. The following paragraphs provide an example of the strategic objectives, key performance questions, logic models, and key performance indicators that were developed for just one section of the MILPERSGEN strategy map.

Figure 2: The MILPERSGEN PMF Strategy Map (Simplified)Footnote34

The recruitment section encompassed attracting, processing, selecting, and enrolling/transferring personnel (i.e., both external recruitment and internal transfer of existing CAF members). As an example, Table 2 lists one of the strategic objectives proposed for recruitment, one of the key performance questions focused on the attraction aspect of the objective, and a relevant key performance indicator. In most cases, one to three questions were created for each objective, and one to three indicators were developed to answer each question.

| Element | Example |
|---|---|
| Objective | Meet the dynamic personnel generation needs of the CAF by attracting, processing, selecting, and enrolling quality external applicants. |
| Question | How effectively does the external attraction process meet the needs of the CAF? |
| Indicator | The number of external applicants received for processing. |
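The nesting described above (each objective with one to three questions, each question with one to three indicators) can be sketched as a small data structure. In this Python sketch, the `Objective` and `Question` classes and the `count_warnings` helper are hypothetical names. Because the article describes the one-to-three range as what held “in most cases,” the helper reports warnings for review rather than rejecting a framework outright. The example encodes Table 2.

```python
from dataclasses import dataclass, field


@dataclass
class Question:
    text: str
    indicators: list = field(default_factory=list)  # indicator descriptions


@dataclass
class Objective:
    text: str
    questions: list = field(default_factory=list)  # Question instances


def count_warnings(objective, low=1, high=3):
    """Flag an objective or question whose number of children falls outside low..high.

    The default 1-3 range mirrors the article's "in most cases" guidance,
    so the results are warnings, not hard errors.
    """
    warnings = []
    if not low <= len(objective.questions) <= high:
        warnings.append(f"objective has {len(objective.questions)} questions")
    for q in objective.questions:
        if not low <= len(q.indicators) <= high:
            warnings.append(f"question {q.text!r} has {len(q.indicators)} indicators")
    return warnings


# The Table 2 example as one objective -> question -> indicator chain.
recruitment = Objective(
    "Meet the dynamic personnel generation needs of the CAF by attracting, "
    "processing, selecting, and enrolling quality external applicants.",
    [Question(
        "How effectively does the external attraction process meet the needs of the CAF?",
        ["The number of external applicants received for processing."],
    )],
)
print(count_warnings(recruitment))  # []
```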

A logic model was also developed for each objective, and Table 3 provides an example from the logic model developed for the attraction objective. It shows just one of the inputs for a single activity, one of the outputs produced, and some of the outcomes to which that output theoretically contributes. Establishing measures of such outputs and identifying data that could be used as evidence of such outcomes was another way that key performance indicators were developed for each of the organizations in the MPS.

| Component | Example (attraction objective) |
|---|---|
| Input | Potential applicants |
| Activity | Recruiting activities (e.g., career fairs) |
| Output | Applicants |
| Direct Outcome | Public interest in employment opportunities in the CAF |
| Intermediate Outcome | Increased pool of quality applicants |
| Ultimate Outcome | CAF occupational requirements are met |
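A logic model excerpt such as Table 3 can also be held as an ordered chain of components. The Python sketch below is illustrative only: `STAGES` reproduces the component order from Table 1, while `is_well_ordered` and `describe` are hypothetical helpers that check that a chain runs from inputs toward ultimate outcomes and render it on a single line. Skipping a stage is permitted, since an excerpt may show only some components.

```python
# Component order as listed in Table 1.
STAGES = ("Input", "Activity", "Output", "Direct Outcome",
          "Intermediate Outcome", "Ultimate Outcome")

# The Table 3 excerpt for the attraction objective, as (component, description) pairs.
attraction = [
    ("Input", "Potential applicants"),
    ("Activity", "Recruiting activities (e.g., career fairs)"),
    ("Output", "Applicants"),
    ("Direct Outcome", "Public interest in employment opportunities in the CAF"),
    ("Intermediate Outcome", "Increased pool of quality applicants"),
    ("Ultimate Outcome", "CAF occupational requirements are met"),
]


def is_well_ordered(chain):
    """True if components appear in strictly increasing STAGES order.

    Raises ValueError for a component name that is not in STAGES.
    """
    positions = [STAGES.index(stage) for stage, _ in chain]
    return all(a < b for a, b in zip(positions, positions[1:]))


def describe(chain):
    """Render the chain as a single 'A -> B -> ...' line."""
    return " -> ".join(text for _, text in chain)


print(is_well_ordered(attraction))  # True
print(describe(attraction[2:4]))    # Applicants -> Public interest in employment opportunities in the CAF
```

An ordering check only confirms the shape of the chain; whether each link actually holds still depends on the assumptions behind it.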

Over a dozen organizations in the MPS applied this method in developing their PMFs, which helped each of them explain its mission in terms of the objectives for different functional areas and identify the best data to answer key performance questions related to those objectives. It also helped each organization articulate its strategy and the evidence that would ideally demonstrate that the strategy is working.Footnote37 By identifying and monitoring the relevant key performance indicators, those organizations were better positioned to manage and report on their results.

Communicating Organizational Performance

The MPS PMF was meant to be an authoritative resource for senior leadership, providing both at-a-glance information about the overall performance of the MPS, and more detailed information on specific aspects when needed. Relating to the final step in the PMF development process, the following section describes certain requirements and best practices for communications solutions that could provide such breadth and depth for reporting organizational performance.

Communication Platform RequirementsFootnote38

A web-based platform was the ideal means to maximize accessibility and avoid the need for specialized software on the user’s end. This platform was required to embody user-centred design,Footnote39 usability heuristics,Footnote40 persuasive technology,Footnote41 and best practices in the presentation of visual information.Footnote42 It was also required to be expandable and collapsible, affording both broad and deep views of MPS performance. Other requirements related to the careful stewardship of the underlying data, namely performance-related data coming from a variety of organizations that document and store information independently of one another. The platform also had to be easily modified, as the MPS was expected to evolve over time in response to constantly shifting internal and external factors. A final requirement was for permission controls for different user profiles (e.g., those who maintain the platform versus those who simply want to view it).
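The permission requirement above follows a common role-based access pattern. The sketch below assumes just two profiles, matching the example in the text, and uses hypothetical action names (`update_data`, `edit_indicator`, `edit_structure`); an actual platform would define its own roles and actions.

```python
from enum import Enum


class Role(Enum):
    VIEWER = "viewer"          # simply wants to view performance information
    MAINTAINER = "maintainer"  # keeps the platform and its data current


# Hypothetical permission table: the actions each user profile may perform.
PERMISSIONS = {
    Role.VIEWER: frozenset({"view"}),
    Role.MAINTAINER: frozenset({"view", "update_data", "edit_indicator", "edit_structure"}),
}


def is_allowed(role, action):
    """Return True if the given user profile may perform the action."""
    return action in PERMISSIONS.get(role, frozenset())


print(is_allowed(Role.VIEWER, "edit_structure"))      # False
print(is_allowed(Role.MAINTAINER, "edit_structure"))  # True
```

Keeping permissions in a single table means that adding a profile or action is a data change rather than a code change, which suits a platform expected to evolve with the MPS.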

Best Practices in Communicating Organizational Performance

Researchers have found that best practices for communicating organizational performance include cascading scorecards and interactive modular dashboards, described as follows.Footnote43 Performance scorecards offer a quick overview, in a single location, of all the performance information critical to an organization’s strategic objectives.Footnote44 Presented as a table or spreadsheet, a scorecard lists many performance indicators sorted by the objective, goal, purpose, or function to which they relate. Each column represents a different property of the indicators. These properties typically include past, present, and target performance levels, with colour-coded shapes signalling current performance against the expected level of performance. For example, a red circle may be used to indicate poor performance.Footnote45 Scorecards were popularized by The Balanced Scorecard, a specific type of scorecard that categorizes indicators across four different perspectives on the organization.Footnote46 In a similar fashion, a scorecard for reporting MPS performance could categorize indicators (e.g., by functional area).Footnote47 The cascading structure offered by such an expandable and collapsible scorecard would make it well suited as a communication platform for a PMF.Footnote48
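The colour-coded status column can be sketched as a rule that compares present performance with the target, plus a trend computed from past and present values. In this Python sketch, the `ScorecardRow` class, the green/yellow/red labels, and the 10% tolerance band are assumptions chosen for illustration; the article does not specify thresholds. The `higher_is_better` flag covers indicators, such as cost per product unit, where a lower value is the desired direction.

```python
from dataclasses import dataclass


@dataclass
class ScorecardRow:
    """One scorecard row: an indicator with past, present, and target levels."""
    indicator: str
    past: float
    present: float
    target: float
    higher_is_better: bool = True

    def status(self, tolerance=0.10):
        """Colour-code present performance against the target.

        green: target met; yellow: short of target by no more than
        tolerance * |target|; red: otherwise. The 10% band is an assumption.
        """
        if self.higher_is_better:
            shortfall = self.target - self.present
        else:
            shortfall = self.present - self.target
        if shortfall <= 0:
            return "green"
        if shortfall <= tolerance * abs(self.target):
            return "yellow"
        return "red"

    def trend(self):
        """Compare present with past, respecting the indicator's direction."""
        change = self.present - self.past
        if not self.higher_is_better:
            change = -change
        if change > 0:
            return "improving"
        if change < 0:
            return "declining"
        return "no change"


row = ScorecardRow("Indicator A (illustrative values)", past=90, present=95, target=100)
print(row.status(), row.trend())  # yellow improving
```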

Performance dashboards differ from performance scorecards in that a dashboard depicts a variety of information in several graphs and charts.Footnote49 Presenting the results in different ways can consolidate disparate but complementary performance-related information from sources throughout a system or an organization, giving them great potential as management tools.Footnote50 Researchers have noted that the best dashboards are modular, allowing different displays that are easily swapped in or out, and interactive, preferably through direct manipulation at the surface level instead of through layered menus.Footnote51

The project team envisioned a large cascading scorecard allowing users to navigate through the different elements of the MPS and discover performance information relevant to each strategic objective. Specifically, it was proposed that each row of the scorecard would display the status of a particular indicator, and that clicking on an indicator would take the user to a dashboard displaying a variety of information or metadata related to that indicator.Footnote52
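The proposed cascade can be sketched as a tree of rows in which clicking a grouping row expands or collapses it, and clicking an indicator row opens that indicator’s dashboard. In this Python sketch, the `Node` class, the `visible_rows` and `on_click` functions, the `dashboard:` key format, and the example rows and status are all hypothetical; a real implementation would sit inside whatever web framework the platform used.

```python
from dataclasses import dataclass, field
from typing import Iterator, List, Optional, Tuple


@dataclass
class Node:
    """A scorecard row: a grouping (e.g., an objective) or an indicator leaf."""
    name: str
    children: List["Node"] = field(default_factory=list)
    status: Optional[str] = None  # set only on indicator rows
    expanded: bool = False


def visible_rows(node: Node, depth: int = 0) -> Iterator[Tuple[int, str]]:
    """Yield (indent depth, label) for every row currently shown."""
    label = node.name if node.status is None else f"{node.name} [{node.status}]"
    yield depth, label
    if node.expanded:
        for child in node.children:
            yield from visible_rows(child, depth + 1)


def on_click(node: Node) -> Optional[str]:
    """Toggle a grouping row; for an indicator row, return a dashboard key."""
    if node.children:
        node.expanded = not node.expanded
        return None
    return f"dashboard:{node.name}"  # hypothetical routing scheme


# Hypothetical rows: functional area -> objective -> indicator.
indicator = Node("External applicants received for processing", status="green")
objective = Node("Attract quality external applicants", [indicator])
area = Node("Recruitment", [objective])

on_click(area)       # expand the functional area
on_click(objective)  # expand the objective
for depth, label in visible_rows(area):
    print("  " * depth + label)
print(on_click(indicator))  # dashboard:External applicants received for processing
```

Rendering only the rows under expanded nodes is what lets one scorecard offer both the at-a-glance view and the detailed drill-down.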

Lessons

A few of the lessons emerging from the MPS PMF development process have informed other applications of the process and could be useful to anyone employing similar processes.

Attitudes toward Performance Measurement

The project team learned that discussing their organization’s performance made some representatives apprehensive about the purpose of the task, anxious about its complexity, and uncertain about how to proceed. It was therefore important to frame this activity as a valuable opportunity for each organization to clarify its strategy and results for those less familiar with the organization. Moreover, it was important to explain that identifying unsatisfactory performance is the first step in bringing about improvements. When individuals saw the process as an opportunity to communicate the organization’s success, and as a mechanism through which results could be improved, they were more engaged in the process and the tasks involved.Footnote53

Sustaining Agendas and Change Agendas

An important distinction that emerged repeatedly during the development of the MPS PMF was that between an organization’s sustaining agenda and its change agenda, along with an understanding of how the two are connected. The sustaining agenda reflects the day-to-day efforts of the organization against its enduring mandate. It refers to ongoing roles or responsibilities that have existed for some time and are intended to continue indefinitely.Footnote54 The change agenda, on the other hand, typically introduces a new strategy or initiative related to the organization’s purpose. Since the change agenda is often meant to improve, transform, or expand upon the sustaining agenda, the lifespan of the change agenda (and thus the measurement of its performance) typically ends once the improvement, transformation, or expansion is complete. Organizational representatives were at times eager to focus on the change agenda for their organization and had to be reminded that the exercise was mainly intended to capture the performance of the sustaining agenda for the MPS, a good example of which is the personnel generation function.Footnote55

The Importance of Logic Models

In most applications of the process, the development of logic models was the most essential step. By identifying the inputs that go into specific activities, by precisely describing the outputs produced, and by clearly expressing the intended outcomes of those efforts, logic models provided an important tool for each organization to clarify how exactly it is meant to work. The agreed-upon understanding that resulted simplified the identification of the most relevant performance measures. Logic models were also useful communication tools for those less familiar with the operations of each organization.Footnote56

One lesson specific to the development of logic models is the importance of assumptions inherent to the strategies expressed in the models. Put simply, logic models suggest that producing certain outputs will result in certain outcomes, and that those outcomes will contribute to other outcomes. A logic model contains many such expectations, and any one of those expectations can carry assumptions that are not exactly true. In such instances, the integrity of the logic can break down and certain organizational outcomes may no longer be reasonable. By acknowledging and validating the assumptions most critical to the expectations expressed in its logic model, an organization can discover and correct certain dysfunctions that are impeding its success.

Another lesson related to logic model development has to do with the appropriate expression of the outcome hierarchy. An organization requires a sense of humility to accurately portray the diminishing influence it has on the expected chain of outcomes from its efforts, and to acknowledge the increasing influence of external factors. However, doing so can be an important tool for clarifying accountability and managing expectations around results. While the scope of the project to develop the first PMF for the MPS prevented the inclusion of such details, the team learned that including assumptions and external factors in the relevant logic models would make for a more robust framework.Footnote57

Internal Capability for Performance Measurement

As described earlier, the requirement for government departments, including DND and the CAF, to measure and report on their contribution to the country exceeds anything that existed previously. Additionally, leaders of organizations are increasingly relying on data-driven decision-making and results-based management tools. Since organizational performance measurement can meet both needs, the demand for such work has increased throughout DND and the CAF. However, the resources needed to meet that demand, such as staff with the appropriate tools, training, and experience, have not kept pace. For example, not all MPS organizations had the resources needed to implement the PMF that was developed, and only some of those organizations were able to commit the additional resources necessary. Shortages in the capability and resources needed to meet expectations for performance measurement and reporting were raised at the 2023 Performance and Planning Exchange in Ottawa. There, leaders from the Treasury Board of Canada Secretariat acknowledged suggestions presented by public service professionals from different departments, including adding relevant training at the Canada School of Public Service and providing direction and resources to integrate performance measurement with planning efforts (e.g., strategies and business plans) at all levels of the department. Challenges and limitations also exist within and between levels of the department regarding the availability, integrity, and meaningful expression of all the relevant data. After all, the quality of the underlying data is critical to any performance measurement process. As such, by allocating and prioritizing resources (e.g., staff) for performance data stewardship, departments have an opportunity to better monitor their effectiveness and demonstrate their value.

Photo: Corporal Brendan Gamache, Formation Imaging Services

Members of HMCS Ville de Québec wave goodbye to HMS Prince of Wales as the ships conduct a PassEx, before HMCS Ville de Québec departs the UK-led Carrier Strike Group, during Op HORIZON, in the Sea of Japan, on September 12, 2025.

Summary and Conclusion

This article described the process that was used to develop a performance measurement framework (PMF) for the military personnel system (MPS) of the CAF. It presented a description of each step in the process, examples of results from each step, and several important lessons from over a dozen applications of the process. As anticipated, the MPS has continued to evolve since the completion of this work. As recommended, revisiting and updating the PMF will support the management and reporting of the various results produced by the system, results that are particularly important at a time when DND/CAF has prioritized the reconstitution of the forces.Footnote58 Increasing the resources available for the development, implementation, and use of such frameworks throughout the department will support the Defence Team’s ability to deliver results that Canada and its allies depend on.